Download the White Paper PDF

Get the complete AI-Accelerated Computer Forensics technical white paper as a printable PDF — all 7 sections, component comparisons, and recommended configurations.

Abstract

Computer forensic examination is approaching an inflection point. Although dedicated AI-embedded forensic software remains nascent, the hardware decisions made today will determine which agencies and laboratories can take advantage of machine-learning-assisted triage, anomaly detection, and automated artifact correlation as those tools mature. This paper evaluates the most relevant current processors (AMD Ryzen 9 9950X3D and 9950X3D2, AMD Threadripper 9960X and 9970X, and Intel Core Ultra 9 285K) and GPUs (NVIDIA RTX 5090, RTX PRO 6000 Blackwell, and RTX 6000 Ada), and identifies the combinations that deliver the best forensic throughput per dollar, the most capable premium configuration, and the practical sweet spot for real-world deployment. Memory architecture, specifically the performance cost of ECC RAM versus high-speed desktop DDR5, is examined as a decisive and often underappreciated variable in overall system throughput.

The Case for AI-Ready Forensic Hardware

Computer forensic examiners have long been at the mercy of processing throughput. Imaging a 4TB NVMe drive, ingesting a cloud warrant return, hashing thousands of artifacts, and running keyword searches across terabytes of data are all fundamentally throughput-bound operations, limited by how fast the workstation can move and process data. The examiner waits; the machine works.

Artificial intelligence is beginning to change what the machine can do during that wait. Tools such as Magnet AXIOM, Cellebrite UFED, and X-Ways Forensics have begun embedding ML-assisted classification, facial detection triage, and language-model-based reporting into their workflows. Griffeye Analyze already uses GPU-accelerated neural networks to classify image content at scale. The common thread: each of these accelerated functions either offloads work from the CPU to a GPU or depends on large, fast caches that keep active datasets off the memory bus.

The forensic workstation of 2026 is not merely a fast computer — it is an inference endpoint. And the hardware choices made at purchase time determine how capable that endpoint will be when the software catches up with the hardware.

BitMindz, through its RCKTBX product line, has been designing purpose-built forensic hardware for law enforcement, military, and government agencies since its founding. The configurations explored in this paper represent the engineering thinking already informing the next generation of RCKTBX platforms.

Processor Landscape

The processor is the orchestrator. In forensic workloads, it manages I/O queues from multiple simultaneous NVMe devices, runs hash algorithms across large buffers, coordinates GPU offloads, and handles the recursive directory traversal that underpins nearly every examination. Cache depth and memory bandwidth are the two metrics that matter most for this class of work.
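As a rough illustration of the buffered hashing described above, the following sketch computes MD5 and SHA-256 in a single pass over a stream using Python's standard `hashlib`. The 4 MiB chunk size and the function names are assumptions chosen for illustration; they are not taken from any forensic tool.

```python
import hashlib
import io
from typing import BinaryIO, Dict

# Assumed read-buffer size; tune it for the storage and cache of the actual system.
CHUNK_SIZE = 4 * 1024 * 1024


def hash_stream(stream: BinaryIO, chunk_size: int = CHUNK_SIZE) -> Dict[str, str]:
    """Read the stream once and feed every chunk to both digests."""
    md5 = hashlib.md5()
    sha256 = hashlib.sha256()
    while chunk := stream.read(chunk_size):
        md5.update(chunk)
        sha256.update(chunk)
    return {"md5": md5.hexdigest(), "sha256": sha256.hexdigest()}


def hash_file(path: str) -> Dict[str, str]:
    """Convenience wrapper for hashing an image or artifact on disk."""
    with open(path, "rb") as handle:
        return hash_stream(handle)


# Small in-memory stand-in for an evidence file.
digests = hash_stream(io.BytesIO(b"abc"))
```

Reading the source once and updating both digests from the same buffer avoids a second pass over the media, which matters when the image is measured in terabytes.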

AMD Ryzen 9 9950X3D2 — The Sweet Spot

Released April 22, 2026, the 9950X3D2 is the definitive forensic AI processor for the AM5 platform. By stacking 3D V-Cache beneath both CCDs rather than one, it brings 192 MB of total L3 cache — a 50% increase over the 9950X3D — while eliminating the asymmetry that left the second CCD operating at a cache disadvantage.

The practical result: each CCD's eight cores now have the same 96 MB of low-latency L3, so a thread's cache access no longer depends on which CCD the scheduler places it on. For forensic workloads, where hash tables, directory indexes, and file-carving buffers all compete for L3 residency, this is a meaningful architectural improvement.

In AI inference benchmarks — the metric most relevant to next-generation forensic software — the 9950X3D2 outperforms the 9950X3D by 24% with int8 instructions and 18.6% with FP32 on ResNet50. In general multi-threaded PassMark rankings, it sits at #19 overall among all desktop CPUs. At a few hundred dollars more than the 9950X3D, it delivers a disproportionate performance return for forensic AI workloads.

AMD Ryzen 9 9950X3D — Strong Value Runner-Up

The original 9950X3D (128 MB L3, single V-Cache CCD, $699) remains a formidable forensic processor and a compelling choice for budget-constrained deployments. It ranks 21st among all desktop CPUs in multi-threaded PassMark and outperforms the Intel Core Ultra 9 285K by approximately 3% in multi-threaded workloads.

For agencies not yet running AI-embedded forensic tools, it is an excellent platform. For those planning to leverage ML-assisted triage, classification, or inference as those tools mature, the 9950X3D2's cache architecture and inference advantage make the incremental cost straightforward to justify.

AMD Threadripper 9960X and 9970X — HEDT Throughput

The Threadripper 9960X (24 cores, $1,500) and 9970X (32 cores, $2,500) inhabit a different class entirely. These HEDT platforms support quad-channel DDR5, up to 128 PCIe lanes, and substantially higher RAM capacity ceilings — making them the correct choice when a forensic workstation must simultaneously image multiple large targets, run a virtual machine environment, and serve as a networked examination node.

The 9970X, in multi-threaded benchmarks, runs approximately 85% faster than the 9950X3D in Blender and comparable CPU-bound parallelizable tasks. For imaging pipelines and bulk hash verification across terabytes, that advantage is measurable.
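Bulk hash verification is one of the workloads that scales with core count. The sketch below, which assumes a hypothetical mapping of file paths to expected SHA-256 digests, verifies several files concurrently with a thread pool. CPython's `hashlib` releases the global interpreter lock while hashing buffers larger than 2047 bytes, so threads can use multiple cores here. The function names and the temporary demo files are illustrative only.

```python
import hashlib
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Optional


def sha256_of(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Hash one file in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        while chunk := handle.read(chunk_size):
            digest.update(chunk)
    return digest.hexdigest()


def verify_many(expected: Dict[str, str], max_workers: Optional[int] = None) -> Dict[str, bool]:
    """Return path -> True/False depending on whether the digest still matches."""
    paths = list(expected)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        actual = list(pool.map(sha256_of, paths))
    return {path: digest == expected[path] for path, digest in zip(paths, actual)}


# Demo with two throwaway files standing in for evidence images.
with tempfile.TemporaryDirectory() as workdir:
    first = os.path.join(workdir, "image_a.bin")
    second = os.path.join(workdir, "image_b.bin")
    for path, fill in ((first, b"A"), (second, b"B")):
        with open(path, "wb") as handle:
            handle.write(fill * 100_000)

    manifest = {first: sha256_of(first), second: sha256_of(second)}
    before = verify_many(manifest)

    # Alter the second file after the manifest was recorded.
    with open(second, "ab") as handle:
        handle.write(b"!")
    after = verify_many(manifest)

    all_match_before = all(before.values())
    changed_file_detected = after[second] is False and after[first] is True
```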

The tradeoff: TRX50 platforms are significantly more expensive ($800–$2,000 for the motherboard alone), the power envelope is higher, and ECC-registered RDIMM memory introduces the latency penalty described in Section 4.

Intel Core Ultra 9 285K — The Competitive Reference Point

Intel's Arrow Lake flagship is a competent platform and a legitimate choice where vendor familiarity or software certification requirements favor it. In productivity benchmarks, it trails the 9950X3D meaningfully, and the LGA 1851 platform does not offer the cache depth of AMD's X3D parts. It remains relevant for mixed workloads and where Intel's QuickSync video acceleration is useful, but it is not the optimal primary forensic processor for AI-augmented pipelines in 2026.

| Processor | Key specifications |
| --- | --- |
| AMD Ryzen 9 9950X3D2 | 16C / 32T · Dual V-Cache CCDs · 192 MB L3 (both CCDs) · Zen 5 · +24% AI inference vs. 9950X3D · AM5 · DDR5 · Released Apr 2026 |
| AMD Ryzen 9 9950X3D | 16C / 32T · 4.3 / 5.7 GHz · 128 MB L3 · Single V-Cache CCD · DDR5-5600 · PCIe 5.0 · AM5 · MSRP $699 |
| AMD Threadripper 9960X | 24C / 48T · Zen 5 · Quad-channel DDR5 · 128 PCIe lanes · TRX50 platform · MSRP $1,500 |
| AMD Threadripper 9970X | 32C / 64T · Zen 5 · Quad-channel DDR5 · 128 PCIe lanes · 85% faster than 9950X3D (MT) · MSRP $2,500 |

GPU Architecture for Forensic AI

Graphics processing units have become the primary execution engine for any machine learning inference task embedded in forensic software. Tensor cores handle the matrix math underlying neural network operations; CUDA cores handle the parallel SHA-256 and MD5 hash operations that remain the backbone of evidence integrity verification; and VRAM capacity determines how large a model — or how many simultaneous inference batches — can be held resident without spilling to system memory.

It is important to note the distinction between the forensic AI use case and the image/video generation workloads that dominate GPU marketing. Forensic AI tasks — document classification, facial detection triage, keyword spotting in encrypted containers, timeline anomaly scoring — are inference-dominated, not training-dominated. They favor fast tensor throughput, large VRAM for keeping models resident, and stable, certified driver stacks over raw rasterization frame rates.

NVIDIA GeForce RTX 5090 — Raw Performance Leader

The RTX 5090 represents the apex of the Blackwell consumer architecture: 21,760 CUDA cores, 32 GB of GDDR7 memory, and a 575W TDP that demands a 1,200W+ power supply in a fully loaded workstation. Its fifth-generation Tensor Cores deliver excellent inference throughput and its PCIe 5.0 interface ensures minimal CPU-to-GPU transfer latency when feeding large forensic datasets.
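The power-supply sizing above can be sanity-checked with simple arithmetic. In the sketch below, only the 575W GPU figure comes from the text; the CPU and platform wattages and the 25% headroom factor are placeholder assumptions that should be replaced with measured or vendor-published values for a real build.

```python
import math
from typing import Dict


def recommended_psu_watts(component_watts: Dict[str, float],
                          headroom: float = 0.25,
                          step: int = 100) -> int:
    """Sum component draw, add headroom, and round up to the next PSU size step."""
    total = sum(component_watts.values())
    return math.ceil(total * (1 + headroom) / step) * step


build = {
    "gpu_rtx_5090": 575,      # from the text above
    "cpu": 170,               # assumed placeholder, not a published figure
    "board_ram_storage": 150  # assumed placeholder for everything else
}

psu_watts = recommended_psu_watts(build)
```

With these placeholder values the estimate lands on a 1,200W unit, which is consistent with the recommendation in the text.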

For most current and near-term forensic AI workloads — those fitting within 32 GB of VRAM — the RTX 5090 is the fastest single GPU available and represents exceptional value relative to the professional tier.
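Whether a workload fits within 32 GB of VRAM can be estimated from the model's parameter count and numeric precision. The sketch below is a back-of-the-envelope check only: the 20% overhead factor for activations and runtime buffers is an assumption, the model sizes are hypothetical examples, and decimal gigabytes are used throughout.

```python
def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in decimal GB (1 billion params x 1 byte = 1 GB)."""
    return params_billion * bytes_per_param


def fits_in_vram(params_billion: float, bytes_per_param: float,
                 vram_gb: float, overhead: float = 1.2) -> bool:
    """True if the weights plus an assumed overhead margin fit in the given VRAM."""
    return weights_gb(params_billion, bytes_per_param) * overhead <= vram_gb


# Hypothetical examples: a 7B-parameter model in FP16 and a 70B-parameter model in int8.
small_on_5090 = fits_in_vram(7, 2, vram_gb=32)
large_on_5090 = fits_in_vram(70, 1, vram_gb=32)
large_on_pro_6000 = fits_in_vram(70, 1, vram_gb=96)
```

Under these assumptions the smaller model fits comfortably in 32 GB, while the larger one needs the 96 GB class of card.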

NVIDIA RTX PRO 6000 Blackwell — Professional Precision

The RTX PRO 6000 Blackwell is the most capable desktop workstation GPU ever produced. It carries 24,064 CUDA cores (an 11% increase over the RTX 5090), 96 GB of ECC GDDR7 memory, NVLink 5 support for multi-GPU configurations, and a stable professional driver stack certified for enterprise workloads. In its 300W Max-Q Workstation Edition (the standard Workstation Edition is rated at 600W), it carries triple the VRAM at roughly half the RTX 5090's power draw, which makes multi-GPU deployments practical in a standard workstation chassis.

The RTX PRO 6000 is the correct choice when forensic AI workflows scale to large language models for document analysis, when multiple concurrent examination jobs must remain GPU-resident simultaneously, or when 24/7 operational reliability is non-negotiable. Its $8,000–$11,000 price point reflects its professional positioning.

NVIDIA RTX 6000 Ada — The Established Workhorse

For agencies with existing Ada-generation workstations, the RTX 6000 Ada (48 GB GDDR6, 18,176 CUDA cores) remains a capable forensic AI accelerator. It predates the Blackwell generation by approximately two years, but its VRAM capacity and certified driver stack make it a competent platform for current forensic AI tools. Agencies considering new purchases should note that Blackwell GPUs have benchmarked 2.5× faster than Ada for AI training and inference tasks.

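| GPU | Key specifications |
| --- | --- |
| NVIDIA GeForce RTX 5090 | 21,760 CUDA cores · Blackwell · 32 GB GDDR7 · 1,792 GB/s BW · 575W TDP · PCIe 5.0 x16 · MSRP ~$4,000 |
| NVIDIA RTX PRO 6000 Blackwell | 24,064 CUDA cores · Blackwell · 96 GB ECC GDDR7 · NVLink 5 · 300W TDP · Enterprise drivers · MSRP $8,000–$11,000 |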

Memory Architecture: The Overlooked Variable

Of all the decisions in a forensic workstation build, memory architecture is the most consequential and least discussed. The conventional assumption in enterprise hardware is that ECC (Error-Correcting Code) memory is superior because it detects and corrects single-bit errors — preventing silent data corruption in long-running operations. In a database server or financial transaction processor, where a flipped bit can corrupt a critical record without any visible indication, that argument is sound. In a forensic examination environment, it deserves significantly more scrutiny.

Computer forensics is, at its core, a binary discipline. The data either exists on the target media or it does not. A recoverable file is there or it isn't. An email, a photograph, a chat log: these artifacts are either present and intact or they are absent. Forensic evidence is not precision arithmetic or financial calculation, where a single altered bit produces a silently wrong answer. Forensic tools read and interpret data structures; if a bit were flipped in transit through RAM, the resulting artifact would be visibly corrupt or unreadable, not silently incorrect. Where a flip does alter acquired data, the mismatch surfaces when the image hash is verified against the source hash. The examiner sees it, flags it, and moves on.
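One way a flipped bit becomes visible rather than silent is through routine hash verification. The sketch below simulates a single-bit flip in a copy of some data and shows that its SHA-256 digest no longer matches the digest recorded from the source. The buffer contents and the helper names are illustrative assumptions, and the example assumes the source digest was computed independently of the damaged copy.

```python
import hashlib


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Return a copy of data with exactly one bit inverted."""
    buffer = bytearray(data)
    buffer[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(buffer)


def verify_image(image: bytes, source_sha256: str) -> bool:
    """Compare the digest of the acquired copy with the digest of the source."""
    return hashlib.sha256(image).hexdigest() == source_sha256


# 1 MiB stand-in for a source device, hashed at acquisition time.
source = bytes(range(256)) * 4096
source_hash = hashlib.sha256(source).hexdigest()

clean_copy = bytes(source)
damaged_copy = flip_bit(source, 123_456)  # one bit differs

clean_ok = verify_image(clean_copy, source_hash)
damaged_ok = verify_image(damaged_copy, source_hash)
```

The clean copy verifies and the damaged copy does not, even though the two differ by a single bit out of more than eight million.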

High-Speed Desktop DDR5 vs. ECC Registered RDIMM

ECC registered memory typically operates at 4800–5200 MT/s with looser, higher-latency timings, whereas high-speed consumer DDR5 kits operate at 6000–7200 MT/s with tighter timings. The bandwidth differential, roughly 15–30%, is not academic. The AMD Ryzen 9 9950X3D's Infinity Fabric is tuned to run synchronously with memory speed. At DDR5-6000, the processor runs at its designed peak. At DDR5-4800, both bandwidth and fabric latency degrade, directly slowing the CPU-side forensic operations that depend on moving large buffers: imaging, hashing, file carving, and any AI inference that requires frequent CPU-to-GPU data handoff.
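The bandwidth differential can be checked against theoretical peak figures. The sketch below assumes a 64-bit (8-byte) data path per memory channel and a dual-channel AM5 configuration; it computes peak numbers only, and sustained real-world bandwidth will be lower.

```python
def peak_bandwidth_gb_s(mt_per_s: int, channels: int = 2,
                        bytes_per_transfer: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s: transfers/s x bytes per transfer x channels."""
    return mt_per_s * 1e6 * bytes_per_transfer * channels / 1e9


def reduction_pct(fast_gb_s: float, slow_gb_s: float) -> float:
    """Percentage of bandwidth lost when moving from the fast to the slow configuration."""
    return (fast_gb_s - slow_gb_s) / fast_gb_s * 100


ddr5_6000 = peak_bandwidth_gb_s(6000)   # dual-channel desktop kit
ddr5_4800 = peak_bandwidth_gb_s(4800)   # dual-channel at a typical ECC speed
loss_pct = reduction_pct(ddr5_6000, ddr5_4800)
```

For these two speeds the peak figures work out to 96 GB/s and 76.8 GB/s, a 20% reduction, which sits inside the 15–30% range quoted above. Passing `channels=4` gives the corresponding quad-channel figure for the Threadripper platforms.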

Key Finding

Computer forensics is a binary discipline — artifacts are either present or absent, intact or visibly corrupt. The silent bit-flip scenario that ECC is designed to prevent does not translate to a forensic evidence risk the way it does in financial or scientific computing. For AM5 forensic workstations (9950X3D2), high-speed non-ECC DDR5 (DDR5-6000 to DDR5-7200) delivers materially better throughput than ECC memory with no meaningful evidentiary downside. Modern DRAM's on-die ECC already mitigates the primary single-bit error concern at the hardware level.

It is worth acknowledging directly that the ECC debate is a legitimate one, and informed engineers hold strong opinions on both sides. This paper does not dismiss that position. ECC memory has a clear and important role in computing environments where a single flipped bit can produce a silently incorrect result with real-world consequences — genomic sequencing, pharmaceutical modeling, financial risk calculations, or long-duration scientific simulations where one corrupted value can propagate invisibly through an entire dataset. In those contexts, ECC is not optional; it is foundational.

The forensic computing industry, however, has reached a different and well-established consensus through decades of practical experience. BitMindz builds forensic workstations with high-speed non-ECC RAM. So do our competitors. This is not an oversight or a cost-cutting measure — it reflects a considered understanding of what forensic workloads actually demand. Law enforcement and government forensic laboratories have relied on non-ECC systems to produce court-admissible evidence for years, without ECC being raised as a methodological vulnerability in any validated examination framework. The argument for ECC in forensics is theoretically defensible; the argument against it is practically proven.

ECC remains valid, and is the correct choice, for Threadripper HEDT and workstation-class platforms where maximum RAM capacity (256 GB+) is required and where the platform natively demands registered DIMMs. For the AM5-based sweet spot configuration, it is a performance liability.

Storage and Motherboard Considerations

NVMe Storage

Forensic workstations require storage in two distinct roles: the acquisition target interface (where speed directly affects imaging throughput) and the examination working volume (where random read performance determines how quickly tools can traverse directory structures and load artifacts). PCIe 5.0 NVMe drives such as the Samsung 9100 Pro deliver sequential read speeds exceeding 14 GB/s, enabling single-drive imaging of a 2 TB target in under three minutes when the source can sustain that rate. PCIe 4.0 drives such as the WD Black SN850X also work on Gen 5 platforms but top out at roughly half that speed.
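The imaging-time figure follows directly from capacity divided by sustained read rate. The sketch below uses decimal units (1 TB = 1,000 GB) and assumes the source drive sustains the stated rate for the whole transfer, which real targets often will not.

```python
def imaging_seconds(capacity_tb: float, read_gb_s: float) -> float:
    """Best-case time to read an entire device at a constant sequential rate."""
    return capacity_tb * 1000.0 / read_gb_s


two_tb_at_14 = imaging_seconds(2, 14)   # 2 TB target at 14 GB/s
two_tb_minutes = two_tb_at_14 / 60
four_tb_at_14 = imaging_seconds(4, 14)  # the 4 TB case mentioned earlier in the paper
```

At 14 GB/s a 2 TB target takes roughly 143 seconds, or about 2.4 minutes, which matches the "under three minutes" claim as a best case.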

For the working volume, a RAID-0 array of two PCIe 5.0 NVMe drives can reach 25 GB/s or more, effectively eliminating storage as a bottleneck for all current forensic software operations. BitMindz RCKTBX configurations standardize on a minimum of two NVMe slots on PCIe 5.0 lanes for forensic-grade storage performance, with additional M.2 or U.2 slots available for overflow volumes and OS isolation.

Motherboard Platform Selection

For the AM5 sweet spot: X870E boards (ASUS ROG Crosshair X870E, MSI MEG X870E ACE) provide full PCIe 5.0 GPU and NVMe lane allocation, DDR5 support up to DDR5-8000+ with XMP/EXPO, and sufficient USB4 and Thunderbolt connectivity for forensic hardware peripherals.

For Threadripper: TRX50 (9960X/9970X) or WRX90 (Pro 9000WX) boards are the only compatible options — budget $800–$2,000 for the platform itself.

Recommended Configurations

Three tiers address the practical range of forensic computing requirements in 2026. Prices below reflect retail component costs and do not include assembly, validation, or the forensic tool licensing that BitMindz integrates into its RCKTBX platform.

| Component | Specification |
| --- | --- |
| CPU | AMD Ryzen 9 9950X3D2 (16C/32T · 192 MB L3 · +24% AI inference vs. 9950X3D) |
| GPU | NVIDIA GeForce RTX 5090 (32 GB GDDR7 · ~$4,000) |
| Memory | 192 GB DDR5-6000 (4×48 GB) · high-speed XMP/EXPO, no ECC (~$2,000) |
| Storage | 2× PCIe 5.0 NVMe 2 TB (OS + working volume) + 2× acquisition bays |
| Motherboard | ASUS ROG Crosshair X870E Hero or MSI MEG X870E ACE |
| Power | 1,200W 80+ Platinum PSU |

This configuration represents the intersection of maximum forensic AI acceleration and practical cost. The 9950X3D2's 192 MB of L3 cache, split evenly at 96 MB per CCD, keeps far more of the active forensic working set on-chip for all 16 cores. Hash verification loops, file-carving buffers, and in-memory directory indexes all see the same cache capacity regardless of which CCD a thread runs on. Its 24% AI inference advantage over the 9950X3D (int8, ResNet50) is the single most compelling hardware argument for forensic AI readiness at the AM5 price point. The RTX 5090 provides sufficient VRAM for any current forensic AI inference task and delivers the fastest single-GPU tensor throughput available. DDR5-6000 keeps the Infinity Fabric at its design frequency. This is the RCKTBX configuration BitMindz recommends for most agency deployments.

| Component | Specification |
| --- | --- |
| CPU | AMD Ryzen 9 9950X3D2 (16C/32T · Dual V-Cache) |
| GPU | NVIDIA RTX PRO 6000 Blackwell (96 GB ECC GDDR7 · $8,000–$11,000) |
| Memory | 128 GB DDR5-6400 · high-speed XMP, no ECC |
| Storage | 2× PCIe 5.0 NVMe 4 TB + dedicated acquisition NVMe array |
| Motherboard | ASUS ROG Crosshair X870E Extreme or equivalent |
| Power | 1,000W 80+ Titanium PSU (PRO 6000 draws 300W max) |

The RTX PRO 6000 Blackwell's 96 GB of ECC GDDR7 enables simultaneous residency of multiple large AI models, allowing a forensic platform to run document classification, image triage, and language-model artifact analysis concurrently without swapping GPU memory. NVLink 5 support enables a second PRO 6000 to produce 192 GB of combined VRAM in a dual-card deployment, making this platform forward-compatible with substantially larger forensic AI models as the software ecosystem matures. Note: GPU-side ECC (essential for AI model integrity under extended runtime) is separate from system RAM ECC; desktop DDR5 remains correct for the system memory role here.

| Component | Specification |
| --- | --- |
| CPU | AMD Threadripper 9970X (32C/64T · $2,500) or 9960X (24C/48T · $1,500) |
| GPU | NVIDIA GeForce RTX 5090 (single) or RTX PRO 6000 Blackwell |
| Memory | 128–256 GB DDR5 Quad-Channel (RDIMM ECC at this capacity) |
| Storage | 4× PCIe 5.0 NVMe + U.2 array for high-volume storage |
| Motherboard | TRX50 platform (ASUS Pro WS TRX50-E SAGE or equivalent) |
| Power | 1,600W redundant PSU recommended |

When a laboratory must simultaneously image multiple high-capacity targets, run a virtual machine environment for live forensics, and maintain an AI inference engine for real-time triage, the Threadripper's 32 cores and quad-channel memory bus justify its cost. The 9970X runs approximately 85% faster than the 9950X3D in fully parallelizable workloads. The performance cost of RDIMM ECC memory (required on the TRX50 platform, which accepts only registered DIMMs) is partially offset by the quad-channel bandwidth advantage. This platform is appropriate for regional forensic laboratories, federal agency nodes, and multi-examiner environments where simultaneous heavy workloads are the norm.

Conclusion

The forensic computing industry stands at an inflection point. AI-embedded tools are arriving faster than most agencies' procurement cycles can accommodate, and the hardware decisions made in 2026 will determine which examiners can absorb those capabilities and which will be waiting on hardware refresh cycles to catch up.

The evidence presented in this paper supports a clear hierarchy of recommendations. The AMD Ryzen 9 9950X3D2 paired with a GeForce RTX 5090, high-speed DDR5-6000, and PCIe 5.0 NVMe storage is the optimal configuration for the broadest range of forensic deployments: it delivers maximum per-dollar AI inference throughput, eliminates the memory bandwidth penalty of ECC RAM, and provides a platform that can absorb current and near-term forensic AI software without further hardware investment. The RTX PRO 6000 Blackwell represents the premium ceiling for agencies with the budget and the workload to justify it. Threadripper platforms fill the niche where raw parallelism and extreme RAM capacity take precedence over per-core efficiency.

The argument for ECC system memory is valid in server and workstation contexts where RAM capacity requirements exceed what desktop DDR5 can provide. For AM5 forensic workstations, it is a performance trade-off without a meaningful evidence integrity benefit — modern DRAM's on-die ECC handles the single-bit error concern, and the 15–30% memory bandwidth reduction is felt on every operation the examiner performs.

BitMindz is already engineering the configurations described in this paper. The RCKTBX line of forensic workstations, laptops, servers, and mobile kits is purpose-built for law enforcement, military, and government agencies — with hardware combinations selected specifically for forensic workflow performance, not gaming benchmarks or rendering pipelines.

As AI-embedded forensic software matures, the RCKTBX platform is designed to be the endpoint that captures that capability at launch, not at the next hardware refresh. BitMindz manufactures the machine the software is waiting for.

BitMindz · Irvine, California · CAGE 9RNC8 · SAM.gov Active · bitmindz.com

Download the Complete White Paper

Enter your email and we'll instantly open the complete printable PDF — all 7 sections, processor and GPU comparisons, RAM analysis, and recommended RCKTBX configurations.

Related AI-Ready Hardware

Related Articles

BitMindz: The Leader in AI-Driven Forensic Hardware

Why BitMindz builds AI-optimized forensic infrastructure with GPU acceleration and scalable architecture.

Best Forensic Workstation for Nuix 2026

Why the RCKTBX X6na and X9na are the definitive processing engines for Nuix software.

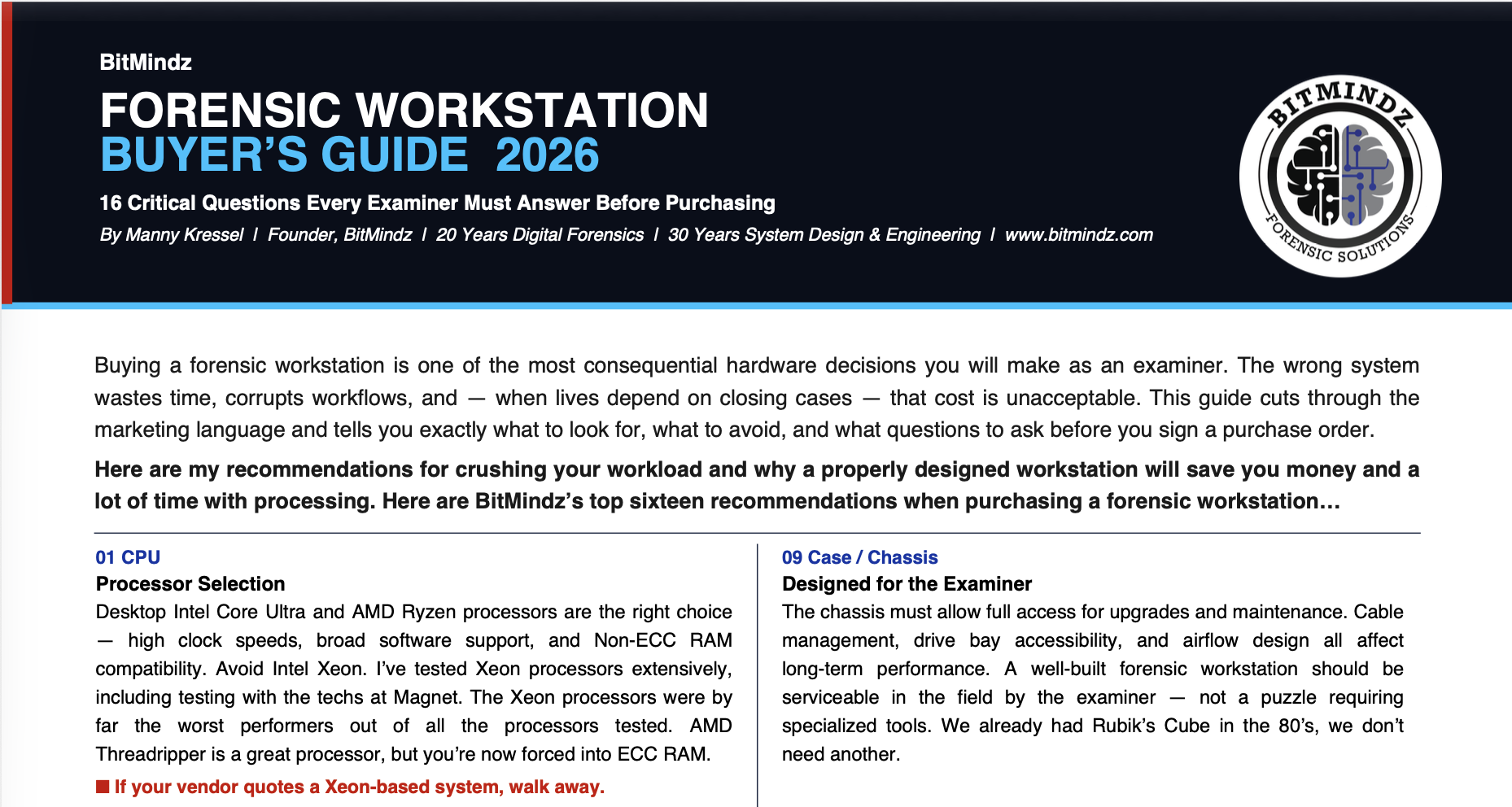

Forensic Workstation Buyer's Guide 2026

16 things to know before you purchase — from an actual forensic computer manufacturer.